General Discussion

From boom to burst, the AI bubble is only heading in one direction (The Guardian)

Good commentary on this from John Naughton (see https://en.wikipedia.org/wiki/John_Naughton ):

https://www.theguardian.com/commentisfree/2024/apr/13/from-boom-to-burst-the-ai-bubble-is-only-heading-in-one-direction

First, displacement. That’s easy: it was ChatGPT wot dunnit. When it appeared on 30 November 2022, the world went, well, apeshit. So, everybody realised, this was what all the muttering surrounding AI was about! And people were bewitched by the discovery that you could converse with a machine and it would talk (well, write) back to you in coherent sentences. It was like the moment in the spring of 1993 when people saw Mosaic, the first proper web browser, and suddenly the penny dropped: so this was what that “internet” thingy was for. And then Netscape had its initial public offering in August 1995, when the stock went stratospheric and the first internet bubble started to inflate.

Second stage: boom. The launch of ChatGPT revealed that all the big tech companies had actually been playing with this AI stuff for years but had been too scared to tell the world because of the technology’s intrinsic flakiness. Once OpenAI, ChatGPT’s maker, had let the cat out of the bag, though, fomo (fear of missing out) ruled. And there was alarm because the other companies realised that Microsoft had stolen a march on them by quietly investing in OpenAI and in so doing had gained privileged access to the powerful GPT-4 large multimodal model. Satya Nadella, the Microsoft boss, incautiously let slip that his intention had been to make Google “dance”. If that indeed was his plan, it worked: Google, which had thought of itself as a leader in machine learning, released its Bard chatbot before it was ready and retreated amid hoots of derision.

-snip-

The third stage of the cycle – euphoria – is the one we’re now in. Caution has been thrown to the winds and ostensibly rational companies are gambling colossal amounts of money on AI. Sam Altman, the boss of OpenAI, started talking about raising $7tn from Middle Eastern petrostates for a big push that would create AGI (artificial general intelligence). He’s also hedging his bets by teaming up with Microsoft to spend $100bn on building the Stargate supercomputer. All this seems to be based on an article of faith; namely, that all that is needed to create superintelligent machines is (a) infinitely more data and (b) infinitely more computing power. And the strange thing is that at the moment the world seems to be taking these fantasies at face value.

-snip-

Which brings us to stage four of the cycle: profit-taking. This is where canny operators spot that the process is becoming unhinged and start to get out before the bubble bursts. Since nobody is making real money yet from AI except those that build the hardware, there are precious few profits to take, save perhaps for those who own shares in Nvidia or Apple, Amazon, Meta, Microsoft and Alphabet (née Google). This generative AI turns out to be great at spending money, but not at producing returns on investment.

-snip-

The 5th stage is panic when the bubble bursts.

Naughton asks at the end of the final paragraph: "Are we caught in an AI bubble? Is the pope a Catholic?"

My thoughts:

1) The sooner this bubble bursts, the better. Generative AI isn't just causing harm; it's also diverted way too much capital, talent, and resources to flawed tech that is unlikely ever to be reliable enough to deliver on the promises made by AI proponents.

2) As a society, we're going to need a 6th phase: cleaning up after generative AI, because so much AI trash is already out there.

AI-created websites are pumping out nearly endless amounts of misinformation.

AI-generated scientific papers, sometimes "peer-reviewed" by AI, are adding more and more errors to scientific literature every day.

Image platforms are rapidly being overwhelmed by AI-generated fake photos and art. Yesterday I saw a tweet from someone who wants to use stock images in a book he's writing but doesn't want AI images. Every stock image platform he checked with told him it couldn't guarantee that the images he picked wouldn't be AI-generated. I also read that a large fraction of the images on Adobe, and nearly half of the fantasy images, are already AI-generated. Many kids are also giving up on learning to create art themselves because they feel they can't compete with a tsunami of AI-generated images.

Publishing platforms have been overwhelmed by AI-generated books.

Music platforms are being swamped by AI-generated music that lets people pretend they're creative by giving brief text prompts to an AI that is obviously using vast amounts of copyrighted material illegally. With music, as with images, you can get near-exact replicas of copyrighted works just by using adjectives that have been used to describe those works, without even naming the artists.

Teachers are having a harder and harder time telling whether their students are actually learning anything, because cheating via AI has become much more widespread than cheating without AI ever was. One estimate, from a Scottish university if I recall correctly, was that it's four times worse now, and it's taking up more and more of teachers' and administrative staff's time to deal with.

We don't yet have unhelpful medical chatbots built on GenAI, which hallucinates, being marketed as $9/hr replacements for nurses, but those are in the works.

The companies pushing GenAI on us have been well aware that their AI tools are unreliable. Many of them have crafted terms of service (TOS) designed to make individual AI users responsible for any harm done by their tools, including copyright violations. You don't expect search engines and business tools to give inaccurate or imaginary answers? The companies tell you it's on you to catch all their flawed tools' failures... and oh, you should also view the inaccurate results as "creative" rather than wrong. When it first became obvious that Google's Bard got things wrong, it was relabeled a "creative companion" and marketed as such. Marketing problem solved, or so some of the top people there thought, but a lot of Google employees were reportedly unhappy with their company's willingness to peddle a flawed product.

Basically, GenAI companies have slimed the world.

Yesterday I saw an AI enthusiast admit that we'll now have to assume anything created in the last couple of years has been polluted by AI. I saw AI critics talking about pollution by AI more than a year ago, but it was a shock to see an enthusiast describe its effect as harmful.

And unless we want to live in a world where more and more of what we see and hear is untrustworthy, we're going to have to clean up after the mess AI has already made. The AI companies didn't give us tools to identify what's produced by AI, and apparently never intended to. The intent was always to fool people.

Yesterday Microsoft announced a new AI to create video from a single image of a face. I guess they thought we didn't have enough deepfakes yet.

It can do this:

Link to tweet

It can also do this:

Link to tweet

Tommy Carcetti

...one of the candidates (a Moms for Liberty type) openly admitted asking ChatGPT what the qualifications were for being a school board member.

She was justifiably roasted.

bucolic_frolic

It just keeps repeating; the same facial movements repeat (especially when she looks to her left).

Not worried. Corporations and health care will find a way to bill it at $348 an hour.

Bernardo de La Paz

Deal with it.

There will be no collapse and cleanup of "AI". It is here to stay.

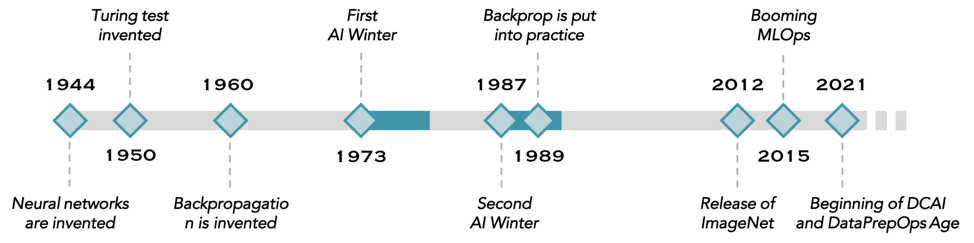

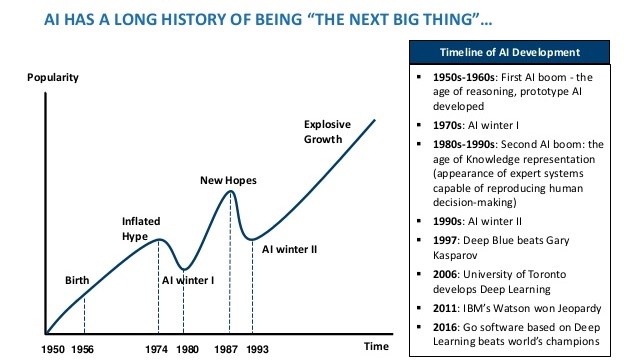

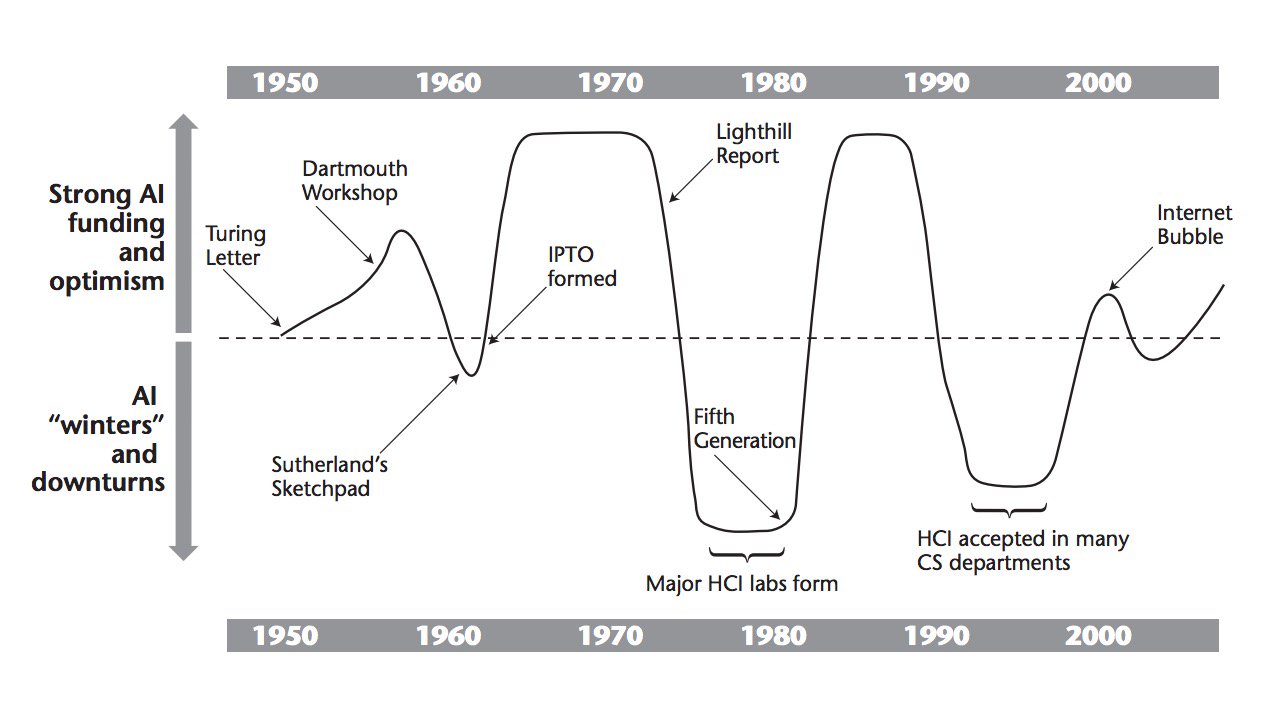

There will be some collapse among stocks in AI, and some drying up of funds. This has happened before in AI. It is called an "AI winter". Winter is coming. But those who think the winter will be the end of AI are lacking insight.

If we posit an AI collapse of some kind in 2025 and an AI winter for five years after, then investing for the long term in 2026, 2027, and 2028 could be productive (but nobody knows). Notice how backpropagation began to be deployed in the first AI winter.

It would not surprise me if any third AI winter is briefer and shallower. Synergistic effects start to kick in at some point.

Scrivener7

getagrip_already

And when he passes, there will be another.

Scrivener7

the AI baby when we had the chance.

But it's already too late.

Yavin4

A lot of people wrote off the internet as a fad. Decades later, our entire economy revolves around it. We couldn't have gotten through the pandemic without it. Retail, transportation, education, entertainment, journalism have all been changed dramatically because of it.

AI is on a similar path. It's not taking hold at the promised pace for the same reason the internet didn't take hold early on: institutional resistance. Most corporations are run by petty bureaucrats who do not want to yield power to new tech.