General Discussion

Delta Meltdown

So, one of the best-run, most profitable, and most on-time airlines built its own internal computing system to track flights, issue tickets, and handle scheduling, billing, and more.

It is a complex, robust, effective computer system, far better than the 1890s technology that Southwest Airlines has been trying to replace.

Can someone explain to me how a power failure in Atlanta caused the airline to collapse? Even my home office computer has a backup power system, letting me work even if the office is getting no power. Do they really expect us to believe that with all its programming and computer successes, Delta did not have a backup power system set up to prevent such a meltdown?

Agschmid

(28,749 posts)

AngryAmish

(25,704 posts)

Massacure

(7,526 posts)

ChairmanAgnostic

(28,017 posts) Ask any SW worker and they will agree that their system HAD to be built in the 1890s.

Agschmid

(28,749 posts)

JHB

(37,163 posts) Of course, it's prone to meltdowns.

DustyJoe

(849 posts) I was IT director for one of the largest commuter airlines for 12 years, with hubs coast to coast. The HQ where the primary server was located had redundant power, redundant data storage, even a redundant server, but the bottleneck was the data carrier. One time a fiber cut 150 miles away severed the data lifeline and a day's outage resulted. The data carrier had no redundant links to the server. Nowadays the use of satellite, fiber, landline, and wireless makes that type of loss rare, but in the '80s and '90s those options weren't available. You can have a backup generator in place, but if your data carrier does not, you are looking at your adequately powered system displaying 'no network access'. Murphy is alive and well in the IT world.
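To put rough numbers on the bottleneck described above, here is a minimal sketch of the usual availability arithmetic: components that must all work multiply their availabilities, while redundant components fail only if every copy fails. The figures are hypothetical, chosen only for illustration (they are not from any airline), and the math assumes failures are independent, which real outages often are not.

```python
from math import prod


def series(*availabilities):
    """Everything in the chain must be up, so availabilities multiply."""
    return prod(availabilities)


def parallel(*availabilities):
    """Redundant copies: the group is down only if every copy is down."""
    return 1 - prod(1 - a for a in availabilities)


# Hypothetical availabilities, for illustration only.
power = parallel(0.999, 0.999)    # grid power plus a backup generator
server = parallel(0.999, 0.999)   # primary plus redundant server
carrier = 0.995                   # one data link with no backup

# Redundant power and servers, but a single data carrier.
single_link = series(power, server, carrier)

# Same setup with a second, independent data link added.
dual_link = series(power, server, parallel(carrier, carrier))

print(f"single data link: {single_link:.6f}")
print(f"dual data links:  {dual_link:.6f}")
```

However much redundancy sits behind it, the single-link figure can never exceed the carrier's own availability, which is the point of the fiber-cut story.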

zipplewrath

(16,646 posts) The press reported once that it was a "power switch" that was being tested and failed. If it was the "power switch" that controlled where the power came from in the first place (including any alternates/backups), that could easily have been an unrealized single point of failure.

I guess I was a tad surprised that in this day of "clouds" the systems aren't a bit more distributed than this. But I guess that just goes with the fact that the systems are old and resistant to change.

Massacure

(7,526 posts) I work in IT. At my previous job, a company with about four billion dollars in revenue, one of my lunch buddies was the data center supervisor, so I got a little bit of insight into this company's Business Continuity Plan from an IT perspective.

This company had two data centers. The primary one was in the basement of the corporate office. The secondary one was located in a building they leased several miles away. This particular company held disaster recovery exercises about every six months. It didn't matter how bumpy the exercises went, the Chief Information Officer always declared them a success much to the chagrin of the people actually executing it.

There was a thunderstorm one summer that knocked out power to a good portion of the city. The data centers have what is called a UPS, an Uninterruptible Power Supply. It's basically a huge set of batteries that automatically power the data center for a period of time after grid power is lost and while the generator is coming online. During this thunderstorm a switch of some sort in that UPS failed, and the data center went dark. It happened so quickly they couldn't even cut over to the secondary data center. The company I was working for at the time was pretty pissed off at the vendor responsible for maintaining the UPS equipment.

I cannot speak to what happened in Delta's case. But to answer your question, Delta probably did have a backup system of some sort, and their means of cutting over to it probably failed just as spectacularly as their primary systems. It's unfortunate, but it happens.

Suburban Warrior

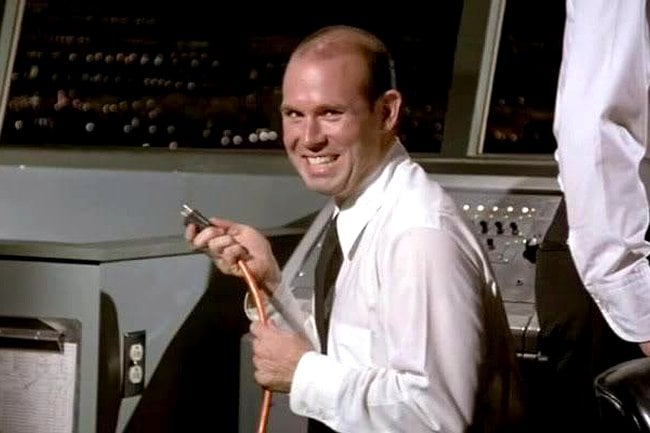

(405 posts) [link: ?x=1200&y=794]

trotsky

(49,533 posts)Great reference.

smirkymonkey

(63,221 posts) I love that movie!

sarcasmo

(23,968 posts)

Hortensis

(58,785 posts) when I heard just how big this was.

MohRokTah

(15,429 posts) Take it from me, almost no company is capable of running a full-fledged high-availability data center itself. Most companies, Delta included, would be better off outsourcing to several hosting organizations strategically located around the globe. You can insource the actual management of the systems and software, but you outsource the hosting and hands-on requirements. This minimizes risk due to power failure, etc., because the hosting facility hosts so many customers that it can afford state-of-the-art generators and backup power systems for the entire facility.

MineralMan

(146,338 posts)on the impact of a catastrophic data outage like this. When they weighed the cost of enough redundancy to prevent it, though, they calculated that it would cost them less to just eat the loss when it happened. Corporations are always doing stuff like that to justify taking chances on things. So, how much did this crash cost Delta? I'm betting it cost less than setting up and maintaining a fully redundant system.

That's my guess.
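The trade-off guessed at above comes down to comparing an expected loss against a recurring cost. A minimal sketch, with every number made up for illustration (none of these are Delta's actual figures, and the function names are hypothetical):

```python
def expected_annual_loss(outage_probability, outage_cost):
    """Chance of a major outage in a year times what that outage would cost."""
    return outage_probability * outage_cost


def cheaper_to_eat_the_loss(outage_probability, outage_cost, redundancy_cost_per_year):
    """True when the expected loss is below the yearly cost of full redundancy."""
    return expected_annual_loss(outage_probability, outage_cost) < redundancy_cost_per_year


# Hypothetical inputs, for illustration only.
outage_probability = 0.05          # 1-in-20 chance of a major outage per year
outage_cost = 100_000_000          # dollars lost if it happens
redundancy_cost = 20_000_000       # dollars per year for a fully redundant setup

print(expected_annual_loss(outage_probability, outage_cost))
print(cheaper_to_eat_the_loss(outage_probability, outage_cost, redundancy_cost))
```

With these made-up inputs the expected loss is well under the redundancy bill, so the spreadsheet says to take the chance. Raise the outage probability or the cost of an outage enough and the answer flips.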

Skittles

(153,226 posts)"Supposedly one of the 59 older Cisco servers had a "soft" hardware failure, which did not send a fail message. Eventually they took individual servers off line one at a time and found the problem."